We spent (rightly, it turns out) two years worrying about AI replacing people. Cute.

The more immediate problem is that every department is now creating its own little digital goblins. Marketing agents. Finance agents. Procurement agents. HR agents. Sales agents. Shadow agents. Personal agents. Agents built by interns. Agents built by consultants. Agents built by people who still name Excel files “final_final_v7_REAL.xlsx”.

That is where this gets funny. And by funny, I obviously mean structurally dangerous, budgetarily stupid, legally foggy, operationally fragile and spiritually exhausting (I have indeed enjoyed cadence-ing this sentence). The usual not-really-well-thought-through corporate innovation cocktail, then. Served lukewarm in a cheap reusable cup with a “responsible AI” sticker on it.

For a while, we imagined artificial intelligence entering the enterprise with a loud marching band through the front door. A big platform, a strategic roadmap, a steering committee, a sober executive sponsor standing in front of a slide called “AI North Star” (which is usually where good ideas go to be embalmed). The board would ask its important questions (of course). Procurement would negotiate the license. Legal would squint at the terms. IT would build the control layer. HR would organize a lunch-and-learn where people pretend to understand embeddings while eating sandwiches with too much mayonnaise.

Adorable.

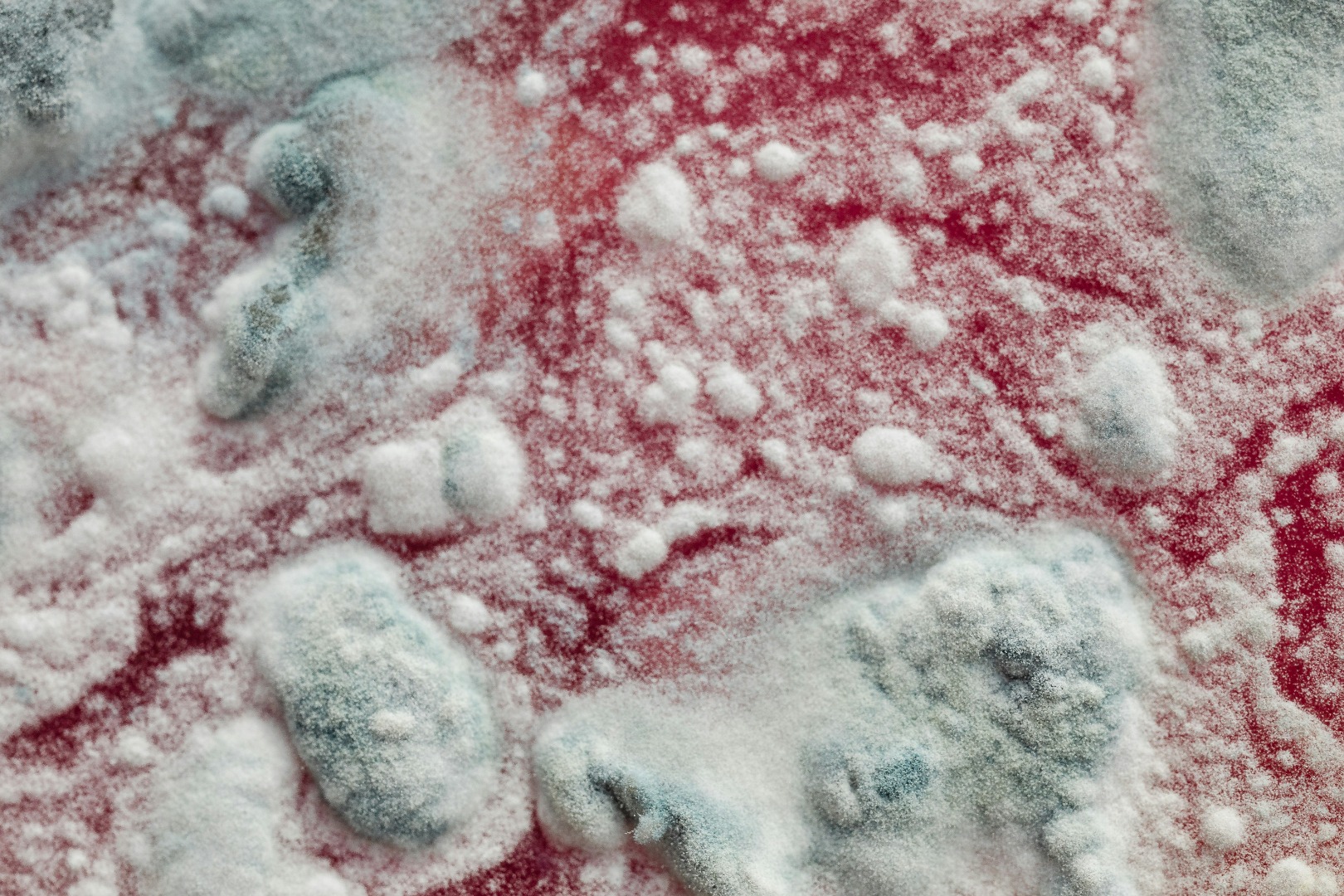

If you ask me, AI will enter the company as mould. Quietly. Everywhere. In corners. In workflows. In browser extensions. In forgotten SaaS tools. In sales teams, in finance, in HR, in procurement. Even in that one over-caffeinated person who discovered an agent that reconciles invoices and now speaks about it with the dangerous glow of an overzealous convert.

Someone in finance will build an agent because the official process still involves six approvals, three systems and a shared mailbox last cleaned during the Obama administration (you know, that erudite guy well before Trump). Someone in sales will build an agent that writes proposals, updates CRM fields and “improves” pipeline notes with the confidence of a LinkedIn influencer after two double espressos. Someone in HR will build an agent that summarizes exit interviews, spots morale issues and accidentally creates a litigation starter pack. Someone in procurement will build an agent that compares suppliers, drafts negotiation emails and begins to feel suspiciously like a very cheap McKinsey associate (now there is a thought) with no expense report.

Everyone will be delighted. For about nine minutes. Then the CIO will ask who approved the thing. Silence. Then what data it can access. More silence, now with professional eye contact avoidance. Then what actions it can take. At that point someone from the business will say, “Well, technically, it only recommends.” That sentence should make all grown adults reach for a World War III-grade helmet.

“Only recommends” is how many corporate systems already make decisions. The human sees the recommendation, assumes the machine did the thinking, checks nothing, clicks approve, and later explains to risk management that “the process worked as designed” (I have written that sentence, in slides, more than once). It is one of those phrases that makes you understand why ancient civilizations occasionally threw things into volcanoes and disappeared without too much fanfare.

The agent problem is already visible. Gartner warned in April 2026 that the average global Fortune 500 enterprise could move from fewer than 15 agents in 2025 to more than 150,000 agents by 2028. One hundred and fifty thousand. Per company. That sounds less like transformation and more like a population explosion with API keys. I notice that the Wall Street Journal picked up the same scent this month: duplicated agents, cyber exposure, conflicting outputs, governance holes and rising compute costs, all arriving while departments build faster than central IT can catalog. A familiar corporate rhythm, really. First everyone wants freedom. Then everyone wants someone else to explain the consequences.

For years, business teams complained that IT was too slow. Often with good reason. IT had become the Department of No, Maybe Later, Please Fill In This Form, We Need Architecture Review (yes, I have put the capital letters there on purpose). So the business found workarounds. First spreadsheets. Then SaaS. Then automation tools. Then shadow IT. Now shadow AI.

The workaround can read your contracts, write to your customers, browse your knowledge base, call other tools, generate content, trigger workflows and explain its reasoning in soothing English while being fundamentally wrong. Progress. Apparently.

Shadow IT was already annoying. Shadow AI comes with initiative on Red Bull.

A spreadsheet sits there and waits to be abused. An AI agent can go looking for trouble. It can call APIs, generate emails, book meetings, update records, search external websites, compare offers, rank candidates, draft supplier complaints at 2.17 in the morning and do all of it with the diplomatic instincts of a wounded badger.

People will trust it too fast because it speaks human. That is the dangerous little magic trick. Software used to look like software: forms, fields, buttons, menus, error messages written by people who clearly lost a bet. Agents are different. They behave like colleagues. They apologize. They summarize. They say “great question.” They explain. They flatter. They remember just enough to feel useful and forget just enough to ruin your week.

The interface is politeness; the backend is authority.

Every organization now needs to face one brutally simple question: when an AI agent acts, who owns the consequences? The user will point to the manager. The manager will point to the process owner. The process owner will point to IT. IT will point to the vendor. The vendor will point to the model provider. The model provider will point to the documentation nobody read. The consultant who built the prototype will already be on another transformation program with a better day rate and a cleaner badge.

Nobody wants that conversation because the answer is expensive. Governance sounds boring until the machine does something weird with money, personal data, pricing, compliance, supplier terms or a customer relationship. Then governance becomes fascinating. People discover audit trails the way medieval villagers discovered comets: too late, with fear, and usually while pointing.

The job replacement debate has become too narrow anyway. Yes, tasks will disappear. Roles will be compressed. Some people will be exposed as expensive forwarding systems with Outlook access (don’t shoot the messenger). Let us not insult reality. But the more immediate organizational shock sits elsewhere. AI agents redistribute action. That is where the power moves. Quietly. Through convenience. Through a workflow improvement. Through a proof of concept. Through a “temporary” workaround that survives longer than the executive who sponsored the official program. That redistribution is also where friction will show its teeth.

Convenience is undefeated.

The sales agent drafts the offer before pricing has reviewed the latest margin constraints. The procurement agent shortlists suppliers before sustainability has checked the small print. The finance agent flags anomalies using a model nobody can explain at quarter close. The HR agent produces a “sentiment insight” that becomes the ghost in a restructuring meeting. The legal agent summarizes a clause and misses the one sentence that matters, because of course it does. The marketing agent generates a campaign that sounds wonderful until someone realizes the claim is legally optimistic poetry.

Then everyone gathers in a Teams call and performs the ancient ritual of corporate innocence.

“We need to understand what happened.” No, you need to understand what you unleashed.

The public web is moving in the same direction. Cloudflare’s Matthew Prince warned at SXSW 2026 that AI bot traffic could exceed human traffic online by 2027, because agents can visit far more sites than humans while completing tasks. One human shopping for a camera might visit five websites. An agent might visit thousands. Charming. The internet is becoming a place where machines inspect pages written by machines so other machines can decide what humans should see. Very normal. Very healthy. Definitely what Tim Berners-Lee had in mind while not screaming into a pillow.

What happens on the public web will happen inside companies. Internal traffic will become agentic. Internal search will become agentic. Internal knowledge will become agentic. Internal approvals will become agentic. Eventually the company becomes a strange little biosphere where humans supervise agents that talk to other agents relying on documents written by previous agents based on meetings nobody remembers.

A bureaucracy, basically. Only faster. And this is where the CIO’s job becomes both more important and more thankless. The board wants acceleration. The business wants freedom. Legal wants control. Risk wants traceability. Security wants identity management. Finance wants cost discipline. Employees want tools that work before they retire. Vendors want everyone to believe the future can be purchased as a subscription tier called Enterprise Plus Platinum Quantum Something.

The CIO sits in the middle, holding the mop, the map and the fire extinguisher. Banning agents would be silly, and also useless. People will use them anyway. They always do. Ban something useful in a company and it immediately becomes more popular, like office gossip or decent coffee. The real work is treating agents as a workforce before they become one by accident.

That means identity. Every agent needs an owner. A named one. A human throat to choke, as the old infrastructure people would delicately put it after their third coffee. It means permissions that do not let agents wander through enterprise data like toddlers in a weapons museum. It means scope, expiry dates, escalation rules, logs, cost ceilings and kill switches that work outside PowerPoint.

Especially kill switches.

A real one. Not “we can always turn it off” whispered by someone who has never tried to decommission anything in a multinational company. If an agent can act, the organization must be able to stop it. Quickly. Cleanly. Without six committees, two vendors and a priest (I have, on at least one occasion, called the priest).
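For the more literal-minded, here is a minimal sketch of what “treating agents as a workforce” could look like as a data structure: a registry where every agent has a named owner, an explicit scope, an expiry date, a cost ceiling, a log and a kill switch that is checked before every action. Everything in it is hypothetical and illustrative (`AgentRecord`, `AgentRegistry`, the scope strings, the euro ceiling, the example owner address). It is not any vendor’s product or API, and it leaves out escalation rules, real identity providers and persistence entirely. The one design choice worth noticing is that the agent never decides for itself whether it may act: the registry does, and the registry answers “no” by default.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AgentRecord:
    """Hypothetical registry entry for one enterprise AI agent."""
    agent_id: str
    owner: str                  # a named human, not a team alias
    scopes: frozenset[str]      # the only actions this agent may take
    expires_at: datetime        # forces periodic re-approval
    cost_ceiling_eur: float     # hard spending limit for the agent's lifetime
    spent_eur: float = 0.0
    killed: bool = False
    log: list[str] = field(default_factory=list)


class AgentRegistry:
    """Hypothetical central catalog that authorizes every agent action."""

    def __init__(self) -> None:
        self._agents: dict[str, AgentRecord] = {}

    def register(self, record: AgentRecord) -> None:
        # No owner, no agent.
        if not record.owner.strip():
            raise ValueError("every agent needs a named owner")
        self._agents[record.agent_id] = record

    def kill(self, agent_id: str, reason: str) -> None:
        # The kill switch: one call, no committees, recorded in the log.
        agent = self._agents[agent_id]
        agent.killed = True
        agent.log.append(f"KILLED: {reason}")

    def authorize(self, agent_id: str, action: str, cost_eur: float,
                  now: datetime | None = None) -> bool:
        agent = self._agents.get(agent_id)
        if agent is None:
            return False  # unregistered (shadow) agents get nothing
        now = now or datetime.now(timezone.utc)
        if agent.killed:
            reason = "killed"
        elif now >= agent.expires_at:
            reason = "expired"
        elif action not in agent.scopes:
            reason = "out of scope"
        elif agent.spent_eur + cost_eur > agent.cost_ceiling_eur:
            reason = "cost ceiling reached"
        else:
            agent.spent_eur += cost_eur
            agent.log.append(f"ALLOW {action} ({cost_eur:.2f} EUR)")
            return True
        agent.log.append(f"DENY {action}: {reason}")
        return False


if __name__ == "__main__":
    registry = AgentRegistry()
    registry.register(AgentRecord(
        agent_id="invoice-reconciler",
        owner="jane.doe@example.com",
        scopes=frozenset({"erp:read", "erp:flag_anomaly"}),
        expires_at=datetime(2030, 1, 1, tzinfo=timezone.utc),
        cost_ceiling_eur=50.0,
    ))
    today = datetime(2026, 6, 1, tzinfo=timezone.utc)
    print(registry.authorize("invoice-reconciler", "erp:read", 1.0, today))          # True
    print(registry.authorize("invoice-reconciler", "erp:post_payment", 1.0, today))  # False
    registry.kill("invoice-reconciler", "odd behaviour every Thursday at 03.00")
    print(registry.authorize("invoice-reconciler", "erp:read", 1.0, today))          # False
```

The point is not the thirty lines of Python. The point is that “who approved it, what can it touch, what does it cost and how do we stop it” become fields someone has to fill in before the agent runs, rather than questions asked in a Teams call afterwards.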

The cultural layer may be the hardest part. People need to understand when they are using an agent, when they are supervising one, when they are responsible for one, and when the agent is simply wrong with excellent grammar. That last category deserves its own training module, preferably with warning lights. Agents will make mistakes in ways humans find difficult to resist believing. A human junior employee makes a mistake and we question competence. An AI agent makes a mistake and half the room asks whether we prompted it correctly. The machine gets epistemological charity. The intern gets a performance review.

There is something deeply stupid in that, which means it will scale nicely. The enterprise agent explosion will not be clean. It will be messy, political, useful, dangerous, productive, embarrassing and occasionally brilliant. Some agents will save days of work. Some will expose rotten processes. Some will quietly become essential. Some will duplicate other agents. Some will generate beautiful nonsense. Some will be built for one urgent project and then live forever in a forgotten corner of the system, still running every Thursday at 03.00, like a tiny ghost with database access.

That is the work now: finding where these things act, who owns them, what they touch, what happens when they are wrong, and whether the company can still explain itself after the agents have been busy. A company that cannot explain how decisions are made has not transformed. It has become haunted.

And the ghosts are multiplying.